NH_Wood said:

SolarAndWood said:

So, very little difference between 30 and 20, just a PIA to burn?

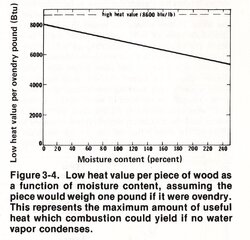

That's what I noticed as well - seems like only ~300 BTU (perhaps less) difference between 20 and 30%. But, perhaps the difference in usable heat from those BTU's is what matters. Even though BTU's are similar, is a larger percentage of BTU's going toward liberating the water, rather than heating the stove, at 30% vs. 20%? With water's high specific heat, I wonder if linear jumps in water content translate to positive, non-linear changes in the relative amount of BTU's that go toward liberating the water. Cheers!

The specific heat of water isn't really relevant to the calculations used in general estimations of heat output because you never know ahead of time exactly how warm the split will be until you put it into the stove. You could need to raise it (and the contained water) 175°F in one case but only 100°F in another case. But what never changes is the amount of heat that is needed to make the water change from the liquid phase at 212°F to the gaseous phase at 212°F. It's called the heat of vaporization, and for water, it is always about 970 BTUs per pound of water. That is the only heat loss the chart is showing.
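To put rough numbers on that, here is a quick sketch of the arithmetic. It assumes MC is quoted on a dry basis (pounds of water per pound of oven-dry wood), which may not be how the chart defines it, and the function names are just made up for illustration. The 970 BTU/lb figure is the one above; the 1 BTU per pound per °F is the ordinary specific heat of liquid water.

```python
# Rough per-pound arithmetic for the heat that goes into the water in a split.
# Assumption: MC is dry-basis (lb of water per lb of oven-dry wood).

HEAT_OF_VAPORIZATION = 970.0  # BTU per lb of water boiled off at 212 F
SPECIFIC_HEAT_WATER = 1.0     # BTU per lb of water per degree F


def water_per_lb_dry_wood(mc_percent):
    """Pounds of water carried by one pound of oven-dry wood."""
    return mc_percent / 100.0


def vaporization_loss(mc_percent):
    """BTU needed to turn that water into steam (the fixed part)."""
    return water_per_lb_dry_wood(mc_percent) * HEAT_OF_VAPORIZATION


def warming_loss(mc_percent, temp_rise_f):
    """BTU needed to warm that water up to 212 F (the part that varies)."""
    return water_per_lb_dry_wood(mc_percent) * SPECIFIC_HEAT_WATER * temp_rise_f


for mc in (20, 30):
    print(f"{mc}% MC: boil-off {vaporization_loss(mc):.0f} BTU, "
          f"warm-up {warming_loss(mc, 100):.0f} to {warming_loss(mc, 175):.0f} BTU "
          f"per lb of dry wood")
```

Under those assumptions the boil-off term is several times larger than the warm-up term, and both scale linearly with the amount of water, so this part of the loss doesn't go non-linear as MC climbs.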

But the big picture is much larger and more complicated. More air is needed to burn wetter wood, so more heat goes up the flue. Wetter wood can actually burn more efficiently than drier wood because the outgassing is slower and more controlled, so you gain some back in that way. And lots of other minor factors make overall efficiency better or worse depending on the burn rate and the moisture content.

It's a serious mistake to assume you will get more and more heat in the home just by using drier and drier wood, or that you will automatically get cleaner burns with wood that is extremely dry. The opposite is usually true. The one thing that can't be denied is that wet wood is always a PITA to burn, even if you end up getting more total heat from it. You need to babysit a stove a bit to get good heat out of wet wood, and most folks don't have the time, nor do they care to waste it in that manner.

I posted this set of interrelated graphs in another thread as well, but it really tells the story fairly well. It is for a typical non-EPA air-tight stove with a constant power output of 17,000 BTU/hr.

Combustion efficiency is highest from 25-30% MC, but heat transfer drops dramatically as the wood gets wetter. Overall efficiency combines both of these effects, and is highest at 20% MC, but drops off as the wood gets either wetter or drier. But isn't 20% MC the magic number for the best burn?
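If it helps to see how two curves like that can combine into a peak somewhere in between, here is a toy sketch. It assumes overall efficiency is simply combustion efficiency times heat transfer efficiency. The numbers in the table are made-up placeholders chosen only to mimic the shapes described, NOT values read off the graphs.

```python
# Toy illustration: overall efficiency as the product of two curves.
# All efficiency values below are hypothetical placeholders, not measured data.

CURVES = {
    # MC %: (combustion efficiency, heat transfer efficiency)
    10: (0.80, 0.70),
    15: (0.84, 0.69),
    20: (0.88, 0.68),
    25: (0.90, 0.64),
    30: (0.90, 0.58),
    35: (0.86, 0.50),
}


def overall_efficiency(mc_percent):
    """Assumed model: overall = combustion x heat transfer."""
    combustion, heat_transfer = CURVES[mc_percent]
    return combustion * heat_transfer


def best_mc():
    """MC with the highest overall efficiency in the placeholder table."""
    return max(CURVES, key=overall_efficiency)


for mc in sorted(CURVES):
    print(f"{mc}% MC: overall {overall_efficiency(mc):.3f}")
print("peak overall efficiency at", best_mc(), "% MC")
```

The point is only that the best overall number doesn't have to sit where either individual curve peaks.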

Things will chart out a little differently in a modern stove. The basic shapes of the curves won't be that far off, but the peaks will likely be at different MCs than the ones shown here. I would expect that a similar test would show that peak overall efficiency occurs around 18% MC and then drops off in either direction from there, but that's just a SWAG. My reasoning is that modern stoves handle outgassing smoke better by generating higher internal temperatures and by introducing secondary air downstream of the main combustion zone. Either that, or they use a catalyst to help combust the extra smoke at lower temperatures to the same effect.

In every single case, there will be an ideal MC for each situation. You'll have to discover through experimentation what that ideal MC is for your set of variables, but it will never be true that the drier the wood gets, the more heat you will get in the room.